Video was first developed for mechanical television systems, which were quickly replaced by cathode-ray tube (CRT) systems, but several new technologies for video display devices have since been invented. Video was originally exclusively a live technology. Charles Ginsburg led an Ampex research team developing one of the first practical video tape recorders (VTR). In 1951 the first video tape recorder captured live images from television cameras by converting the camera's electrical impulses and saving the information onto magnetic videotape.

Video recorders were sold for US $50,000 in 1956, and videotapes cost US $300 per one-hour reel. However, prices gradually dropped over the years; in 1971, Sony began selling videocassette recorder (VCR) decks and tapes into the consumer market. The use of digital techniques in video created digital video, which allows higher quality and, eventually, much lower cost than earlier analog technology. After the invention of the DVD in 1997 and the Blu-ray Disc in 2006, sales of videotape and recording equipment plummeted. Advances in computer technology allow even inexpensive personal computers and smartphones to capture, store, edit and transmit digital video, further reducing the cost of video production and allowing program-makers and broadcasters to move to tapeless production.

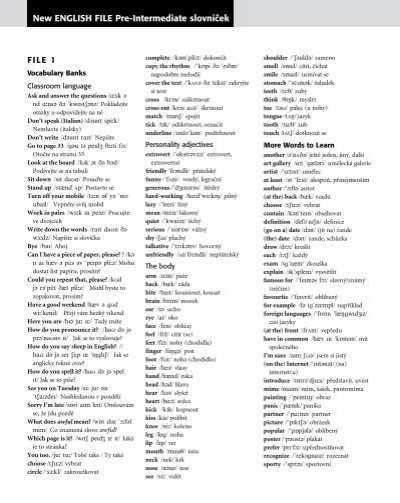


The advent of digital broadcasting and the subsequent digital television transition is in the process of relegating analog video to the status of a legacy technology in most parts of the world. As of 2015, with the increasing use of high-resolution video cameras with improved dynamic range and color gamuts, and high-dynamic-range digital intermediate data formats with improved color depth, modern digital video technology is converging with digital film technology.

Characteristics of video streams

Number of frames per second

Frame rate, the number of still pictures per unit of time of video, ranges from six or eight frames per second (frame/s) for old mechanical cameras to 120 or more frames per second for new professional cameras. The PAL standards (Europe, Asia, Australia, etc.) and SECAM (France, Russia, parts of Africa, etc.) specify 25 frame/s, while the NTSC standards (USA, Canada, Japan, etc.) specify 29.97 frame/s. Film is shot at the slower frame rate of 24 frames per second, which slightly complicates the process of transferring a cinematic motion picture to video. The minimum frame rate to achieve a comfortable illusion of a moving image is about sixteen frames per second.
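The frame rates quoted above translate directly into per-frame durations and frame counts. The following is a minimal sketch rather than a reference implementation: it assumes that the NTSC figure of 29.97 frame/s is shorthand for the exact ratio 30000/1001, and the function names are illustrative only.

```python
from fractions import Fraction

# Nominal frame rates from the text. NTSC's "29.97" is assumed here to be
# shorthand for the exact ratio 30000/1001.
FRAME_RATES = {
    "film": Fraction(24),
    "PAL/SECAM": Fraction(25),
    "NTSC": Fraction(30000, 1001),
}

def frame_duration_ms(rate):
    """Return the duration of one frame in milliseconds."""
    return float(1000 / rate)

def frames_in(seconds, rate):
    """Return how many whole frames fit in the given number of seconds."""
    return int(seconds * rate)

if __name__ == "__main__":
    for name, rate in FRAME_RATES.items():
        print(f"{name}: {frame_duration_ms(rate):.3f} ms per frame, "
              f"{frames_in(60, rate)} frames per minute")
```

One minute of film holds 1440 frames, while one minute of NTSC video needs about 1798, so film frames cannot be mapped one-to-one onto video frames. This mismatch is the complication the paragraph refers to.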

Interlaced vs progressive

Video can be interlaced or progressive. In progressive scan systems, each refresh period updates all scan lines in each frame in sequence.

When displaying a natively progressive broadcast or recorded signal, the result is optimum spatial resolution of both the stationary and moving parts of the image. Interlacing was invented as a way to reduce flicker in early mechanical and CRT video displays without increasing the number of complete frames per second. Interlacing retains detail while requiring lower bandwidth compared to progressive scanning. In interlaced video, the horizontal scan lines of each complete frame are treated as if numbered consecutively, and captured as two fields: an odd field (upper field) consisting of the odd-numbered lines and an even field (lower field) consisting of the even-numbered lines.
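The split into odd and even fields can be sketched in a few lines. This is an illustrative example only: a frame is modelled as a plain Python list of scan lines, and the names `split_fields` and `weave` are made up for this sketch.

```python
def split_fields(frame):
    """Split a frame (a list of scan lines) into odd and even fields.

    Lines are numbered from 1, as in the text, so the odd (upper) field
    starts with the first line of the frame.
    """
    odd_field = frame[0::2]   # lines 1, 3, 5, ...
    even_field = frame[1::2]  # lines 2, 4, 6, ...
    return odd_field, even_field

def weave(odd_field, even_field):
    """Re-interleave two fields into a full frame."""
    frame = []
    for i in range(len(odd_field) + len(even_field)):
        source = odd_field if i % 2 == 0 else even_field
        frame.append(source[i // 2])
    return frame

if __name__ == "__main__":
    frame = [f"line{n}" for n in range(1, 7)]
    odd, even = split_fields(frame)
    print(odd)   # the upper field
    print(even)  # the lower field
```

Weaving the two fields back together recovers the original frame only when both fields were taken from the same instant; fields captured at different times will not line up in moving areas.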

Analog display devices reproduce each frame as two successive fields, effectively doubling the frame rate as far as perceptible overall flicker is concerned. When the image capture device acquires the fields one at a time, rather than dividing up a complete frame after it is captured, the frame rate for motion is effectively doubled as well, resulting in smoother, more lifelike reproduction of rapidly moving parts of the image when viewed on an interlaced CRT display. NTSC, PAL and SECAM are interlaced formats. Abbreviated video resolution specifications often include an i to indicate interlacing. For example, the PAL video format is often described as 576i50, where 576 indicates the total number of horizontal scan lines, i indicates interlacing, and 50 indicates 50 fields (half-frames) per second. When displaying a natively interlaced signal on a progressive scan device, overall spatial resolution is degraded by simple line doubling: artifacts such as flickering or 'comb' effects appear in moving parts of the image unless special signal processing eliminates them. A procedure known as deinterlacing can optimize the display of an interlaced video signal from an analog, DVD or satellite source on a progressive scan device such as an LCD television, digital video projector or plasma panel.
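As a rough illustration of the simplest way to show a field on a progressive display, the sketch below repeats every line of a field to fill a full-height frame (line doubling). The text does not prescribe an algorithm, so this choice is an assumption; the function names are illustrative, and the sketch ignores the one-line vertical offset between upper and lower fields.

```python
def line_double(field):
    """Build a full-height frame from one field by repeating each line."""
    frame = []
    for line in field:
        frame.append(line)
        frame.append(line)  # the duplicate stands in for the missing line
    return frame

def deinterlace_by_line_doubling(fields):
    """Turn a sequence of fields into one progressive frame per field."""
    return [line_double(field) for field in fields]

if __name__ == "__main__":
    upper_field = ["line1", "line3"]
    lower_field = ["line2", "line4"]
    for frame in deinterlace_by_line_doubling([upper_field, lower_field]):
        print(frame)
```

Each output frame has the full line count but only half the vertical detail, which is why more elaborate signal processing is normally preferred.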

Deinterlacing cannot, however, produce video quality that is equivalent to true progressive scan source material.

Aspect ratio

Aspect ratio describes the proportional relationship between the width and height of video screens and video picture elements. All popular video formats are rectangular, and so can be described by a ratio between width and height. The ratio of width to height for a traditional television screen is 4:3, or about 1.33:1. High-definition televisions use an aspect ratio of 16:9, or about 1.78:1.
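The two ways of writing an aspect ratio, as whole numbers (4:3) or relative to one (1.33:1), can be derived from any width and height. The sketch below is illustrative; the resolutions used in the example (640×480 and 1920×1080) are common values chosen for demonstration and are not taken from the text.

```python
from math import gcd

def aspect_ratio(width, height):
    """Reduce a width and height to the smallest whole-number ratio."""
    divisor = gcd(width, height)
    return width // divisor, height // divisor

def as_decimal(width, height):
    """Express the ratio in the 'x:1' form, rounded to two places."""
    return round(width / height, 2)

if __name__ == "__main__":
    for w, h in [(640, 480), (1920, 1080)]:
        a, b = aspect_ratio(w, h)
        print(f"{w}x{h} -> {a}:{b}, about {as_decimal(w, h)}:1")
```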

The aspect ratio of a full 35 mm film frame with soundtrack (also known as the Academy ratio) is 1.375:1. Pixels on computer monitors are usually square, but pixels used in digital video often have non-square aspect ratios, such as those used in the PAL and NTSC variants of the CCIR 601 digital video standard, and the corresponding anamorphic widescreen formats. The same pixel raster uses thin pixels on a 4:3 aspect ratio display and fat pixels on a 16:9 display. The popularity of viewing video on mobile phones has led to the growth of vertical video. Mary Meeker, a partner at the Silicon Valley venture capital firm Kleiner Perkins Caufield & Byers, highlighted the growth of vertical video viewing in her 2015 Internet Trends Report, from 5% of video viewing in 2010 to 29% in 2015. Vertical video ads like Snapchat's are watched in their entirety nine times more frequently than landscape video ads.

Color model and depth

The color model defines the video color representation and maps encoded color values to visible colors reproduced by the system. There are several such representations in common use: YIQ is used in NTSC television, YUV is used in PAL television, YDbDr is used by SECAM television and YCbCr is used for digital video. The number of distinct colors a pixel can represent depends on the color depth, expressed in the number of bits per pixel. A common way to reduce the amount of data required in digital video is chroma subsampling (e.g., 4:4:4, 4:2:2, etc.). Because the human eye is less sensitive to details in color than in brightness, the luminance data for all pixels is maintained, while the chrominance data is averaged for a number of pixels in a block and that same value is used for all of them. For example, this results in a 50% reduction in chrominance data using 2-pixel blocks (4:2:2) or 75% using 4-pixel blocks (4:2:0). This process does not reduce the number of possible color values that can be displayed, but it reduces the number of distinct points at which the color changes.
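The averaging step and the resulting savings can be sketched as follows. This is a simplified, one-dimensional illustration that assumes chroma samples are plain numbers in a single row; real 4:2:0 subsampling averages over a two-by-two block of pixels rather than four pixels in a row. The function names are illustrative.

```python
def subsample_chroma(chroma_row, block_size):
    """Average chroma samples over blocks of `block_size` pixels in a row.

    Returns one averaged value per block; a decoder would reuse that
    value for every pixel in the block.
    """
    averaged = []
    for start in range(0, len(chroma_row), block_size):
        block = chroma_row[start:start + block_size]
        averaged.append(sum(block) / len(block))
    return averaged

def chroma_reduction(block_size):
    """Fraction of chrominance data removed for a given block size."""
    return 1 - 1 / block_size

if __name__ == "__main__":
    row = [100, 110, 200, 220]
    print(subsample_chroma(row, 2))
    print(f"{chroma_reduction(2):.0%} and {chroma_reduction(4):.0%} less chroma data")
```

The luminance samples are left untouched, so only the colour detail is coarsened.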

Video quality can be measured with formal metrics like peak signal-to-noise ratio (PSNR) or through subjective video quality assessment using expert observation. Many subjective video quality methods are described in the ITU-R recommendation BT.500. One of the standardized methods is the Double Stimulus Impairment Scale (DSIS). In DSIS, each expert views an unimpaired reference video followed by an impaired version of the same video. The expert then rates the impaired video using a scale ranging from 'impairments are imperceptible' to 'impairments are very annoying'.

Video compression method (digital only)

Uncompressed video delivers maximum quality, but with a very high data rate.
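To give a sense of scale for uncompressed video, the raw bit rate is simply the product of frame size, bits per pixel and frame rate. The sketch below is a back-of-the-envelope calculation; the example figures (1920×1080 pixels, 24 bits per pixel, 25 frame/s) are assumptions chosen for illustration, and the function names are hypothetical.

```python
def uncompressed_bit_rate(width, height, bits_per_pixel, frames_per_second):
    """Return the raw video bit rate in bits per second."""
    return width * height * bits_per_pixel * frames_per_second

def to_megabits(bits_per_second):
    """Convert bits per second to megabits per second (10**6 bits)."""
    return bits_per_second / 1_000_000

if __name__ == "__main__":
    rate = uncompressed_bit_rate(1920, 1080, 24, 25)
    print(f"{to_megabits(rate):.2f} Mbit/s")
```

With these example values the result is a little over 1.2 Gbit/s, which illustrates why compression is used for most storage and transmission.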

A variety of methods are used to compress video streams, with the most effective ones using a group of pictures (GOP) to reduce spatial and temporal redundancy. Broadly speaking, spatial redundancy is reduced by registering differences between parts of a single frame; this task is known as intraframe compression and is closely related to image compression. Likewise, temporal redundancy can be reduced by registering differences between frames; this task is known as interframe compression, and includes motion compensation and other techniques. The most common modern compression standards are MPEG-2, used for DVD, Blu-ray and satellite television, and MPEG-4, used for AVCHD, mobile phones (3GP) and the Internet.

Stereoscopic video for 3D film and other applications can be displayed using several different methods:

- Two channels: a right channel for the right eye and a left channel for the left eye. Both channels may be viewed simultaneously by using light-polarizing filters 90 degrees off-axis from each other on two video projectors. These separately polarized channels are viewed wearing eyeglasses with matching polarization filters.
- Anaglyph 3D, where one channel is overlaid with two color-coded layers. This left and right layer technique is occasionally used for network broadcast, or recent anaglyph releases of 3D movies on DVD. Simple red/cyan plastic glasses provide the means to view the images discretely to form a stereoscopic view of the content.
- One channel with alternating left and right frames for the corresponding eye, using LCD shutter glasses that synchronize to the video to alternately block the image to each eye, so the appropriate eye sees the correct frame. This method is most common in computer virtual reality applications such as in a Cave Automatic Virtual Environment, but reduces the effective video frame rate by a factor of two.

Formats

Different layers of video transmission and storage each provide their own set of formats to choose from. For transmission, there is a physical connector and signal protocol (see List of video connectors). A given physical link can carry certain display standards that specify a particular refresh rate, display resolution, and color space.

Many analog and digital recording formats are in use, and digital video clips can also be stored on a computer file system as files, which have their own formats. In addition to the physical format used by the data storage device or transmission medium, the stream of ones and zeros that is sent must be in a particular digital video compression format, of which a number are available.

Analog video

Analog video is a video signal transferred by an analog signal. An analog color video signal contains the luminance, or brightness (Y), and the chrominance (C) of an analog television image.

When combined into one channel, it is called composite video, as is the case with NTSC, PAL and SECAM, among others. Analog video may be carried in separate channels, as in two-channel S-Video (YC) and multi-channel component video formats. Analog video is used in both consumer and professional applications.

Transport medium

Video can be transmitted or transported in a variety of ways. One is wireless broadcast as an analog or digital signal.

Coaxial cable in a closed circuit system can carry analog interlaced video at 1 volt peak to peak with a maximum horizontal line resolution of up to 480. Broadcast or studio cameras use a single or dual coaxial cable system with a progressive scan format known as SDI (serial digital interface), or HD-SDI for high-definition video. Transmission distances are somewhat limited and, depending on the manufacturer, the format may be proprietary. SDI has negligible lag and is uncompressed. There are initiatives to use the SDI standards in closed circuit surveillance systems, for higher-definition images over longer distances on coax or twisted pair cable.

Because of the higher bandwidth required, the distance over which the signal can be effectively sent is a half to a third of what the older interlaced analog systems supported.

Connectors, cables, and signal standards

See List of video connectors for information about physical connectors and related signal standards.

Display standards

Analog television broadcast standards include:

- FCS – US, Russia; obsolete
- MAC – Europe; obsolete
- MUSE – Japan
- NTSC
- PAL
- PAL-M – PAL variation
- PALplus – PAL extension
- RS-343 (military)
- SECAM – France, former Soviet Union

An analog video format consists of more information than the visible content of the frame. Preceding and following the image are lines and pixels containing synchronization information or a time delay. This surrounding margin is known as a blanking interval or blanking region; the horizontal and vertical front porch and back porch are the building blocks of the blanking interval.

Computer displays

See Computer display standard for a list of standards used for computer monitors and a comparison with those used for television.


In choosing which pre-intermediate level textbook for students to use in my English language classes, I chose the New English File Pre-Intermediate Student's Book and have found it to be very appropriate. Students who have their own copy in a class can participate in the lessons, which are each well-structured with a main topic on which the exercises are based. The lessons differ in their emphasis on functions in exercises (e.g. pronunciation, reading and speaking), although all lessons have grammar exercises. As some classroom exercises involve listening to entertaining passages or dialogues and answering questions about details mentioned, it is ideal to purchase the classroom CD as well, although the texts are provided at the back of the book and could be read aloud. Other factors (time available for the course, price of books, photocopiable exercises from the teacher's book, exercises for each lesson on the OUP website, etc.) led to my choosing the student's book instead of the multipack book.

Phonetics are used to teach pronunciation. Regular tests for specific stages of the syllabus can be found on the CD in the Teacher's Book, which is useful but has to be purchased separately.